Understanding Pipe and Filter Architecture in AI Modeling

Discover how the Pipe and Filter architecture transforms AI modeling by organizing complex workflows into modular, manageable stages. From preprocessing to model inference and post-processing, this approach enhances scalability, debugging, and reusability. Learn how it simplifies AI systems, handles diverse data, and meets modern challenges.

SOFTWARE ARCHITECTURE

Dr Mahesha BR Pandit

6/16/2024 · 3 min read

In the world of software architecture, the Pipe and Filter design pattern has proven to be a versatile and effective approach for building modular and scalable systems. When applied to AI modeling, it becomes even more impactful, offering a structured way to handle complex data processing pipelines while ensuring each step remains independently manageable.

What is Pipe and Filter Architecture?

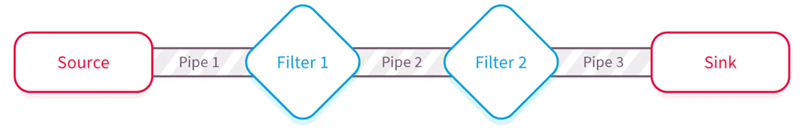

At its core, the Pipe and Filter architecture organizes a system into a series of processing components (filters) that are connected by communication channels (pipes). Each filter performs a specific transformation or computation on the data it receives and passes the processed data to the next filter in the pipeline. This separation of concerns makes it easier to maintain, extend, and reuse individual components.
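To make the idea concrete, here is a minimal sketch in Python. It assumes the simplest possible mapping: each filter is an ordinary function, and the pipe is a helper that feeds one function's output into the next. The names `pipe`, `strip_whitespace`, `to_lowercase`, and `split_words` are illustrative and do not come from any particular library.

```python
from functools import reduce
from typing import Any, Callable, List

# A filter is any callable that takes one value and returns a transformed value.
Filter = Callable[[Any], Any]


def pipe(*filters: Filter) -> Filter:
    """Connect filters left to right into a single callable pipeline."""
    def run(data: Any) -> Any:
        # Feed the output of each filter into the next one.
        return reduce(lambda value, f: f(value), filters, data)
    return run


# Three small illustrative filters, each with a single responsibility.
def strip_whitespace(text: str) -> str:
    return text.strip()


def to_lowercase(text: str) -> str:
    return text.lower()


def split_words(text: str) -> List[str]:
    return text.split()


pipeline = pipe(strip_whitespace, to_lowercase, split_words)
print(pipeline("  Pipe AND Filter  "))  # ['pipe', 'and', 'filter']
```

Because each filter only knows about its own input and output, any of the three can be tested or replaced without touching the others.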

In AI systems, this pattern is particularly useful because data often needs to go through multiple stages of preprocessing, feature extraction, model inference, and post-processing. By breaking these stages into discrete filters, the architecture provides clarity and modularity, which are essential in managing the complexity of AI workflows.

How Pipe and Filter Works in AI Modeling

Imagine an AI system designed to classify images of fruits. The data journey begins with raw image input and progresses through a series of well-defined transformations. First, a filter might resize the image to a standard dimension. Another filter might normalize the pixel values, followed by a filter that extracts features such as edges or textures. Each step outputs data in a format expected by the next stage, creating a seamless flow.

After preprocessing, the data reaches the model inference stage, which can also be structured as a series of filters. For example, one filter might perform a convolutional operation, while another aggregates the results to produce a final prediction. Finally, post-processing filters might convert the prediction into a human-readable format or generate a confidence score.

The beauty of this approach lies in its modularity. If an improved image normalization algorithm becomes available, you only need to replace the normalization filter without affecting other components of the pipeline.
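The sketch below illustrates that swap under some simplifying assumptions: an image is a plain list of rows of grayscale pixel values, resizing uses nearest-neighbour sampling, and `mean_brightness` stands in for a real feature extractor. All function names are hypothetical.

```python
from typing import Any, Callable, List, Sequence

Image = List[List[float]]  # grayscale image as rows of pixel values (assumption)


def resize_nearest(size: int) -> Callable[[Image], Image]:
    """Build a filter that resizes an image to size x size by nearest neighbour."""
    def apply(img: Image) -> Image:
        height, width = len(img), len(img[0])
        return [
            [img[r * height // size][c * width // size] for c in range(size)]
            for r in range(size)
        ]
    return apply


def normalize_by_255(img: Image) -> Image:
    """Original normalization filter: scale 8-bit pixel values into [0, 1]."""
    return [[p / 255.0 for p in row] for row in img]


def normalize_min_max(img: Image) -> Image:
    """Alternative normalization filter: stretch the image's own range to [0, 1]."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    span = (hi - lo) or 1.0  # avoid division by zero on a flat image
    return [[(p - lo) / span for p in row] for row in img]


def mean_brightness(img: Image) -> float:
    """Stand-in feature extractor: the average pixel value."""
    pixels = [p for row in img for p in row]
    return sum(pixels) / len(pixels)


def run_pipeline(filters: Sequence[Callable[[Any], Any]], data: Any) -> Any:
    for f in filters:
        data = f(data)
    return data


raw = [
    [0, 51, 102, 153],
    [51, 102, 153, 204],
    [102, 153, 204, 255],
    [153, 204, 255, 255],
]

original = [resize_nearest(2), normalize_by_255, mean_brightness]
# Only the normalization stage changes; resizing and feature extraction are untouched.
improved = [resize_nearest(2), normalize_min_max, mean_brightness]

print(run_pipeline(original, raw))
print(run_pipeline(improved, raw))
```

The two pipelines differ in a single list element, which is the practical meaning of replacing one filter without affecting the rest.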

Advantages of Pipe and Filter in AI Systems

The Pipe and Filter architecture aligns naturally with the iterative and experimental nature of AI development. Each filter can be developed, tested, and optimized independently, reducing the risk of errors propagating across the system. This independence also allows teams to work in parallel, speeding up the development process.

Moreover, the architecture promotes reusability. Filters created for one AI model can often be adapted for other models with minimal changes. For instance, a filter designed to extract edges from an image can be reused in various computer vision tasks.

Another advantage is the ease of debugging and monitoring. When something goes wrong, it is easier to pinpoint the issue within a specific filter rather than sifting through an entire monolithic codebase. This characteristic is particularly valuable in AI systems, where data anomalies and edge cases are common.
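One way to get this kind of visibility, sketched here under the assumption that each stage is a named function, is a small runner that times every filter and reports which one failed. `FilterError` and `run_traced` are hypothetical helpers written for this example.

```python
import time
from typing import Any, Callable, List, Tuple


class FilterError(RuntimeError):
    """Raised when a named stage fails, so the faulty filter is obvious."""


def run_traced(
    stages: List[Tuple[str, Callable[[Any], Any]]], data: Any
) -> Tuple[Any, List[Tuple[str, float]]]:
    """Run the stages in order, recording how long each one took."""
    timings: List[Tuple[str, float]] = []
    for name, stage in stages:
        start = time.perf_counter()
        try:
            data = stage(data)
        except Exception as exc:
            # Report the stage name and the input that triggered the failure.
            raise FilterError(f"stage '{name}' failed on input {data!r}") from exc
        timings.append((name, time.perf_counter() - start))
    return data, timings


stages = [
    ("parse", float),
    ("invert", lambda x: 1 / x),
    ("round", lambda x: round(x, 2)),
]

result, timings = run_traced(stages, "4")
print(result)  # 0.25

try:
    run_traced(stages, "0")  # an edge case: division by zero in one filter
except FilterError as err:
    print(err)  # names the 'invert' stage as the culprit
```

The per-stage timings returned alongside the result can also feed a monitoring dashboard, although that part is left out of the sketch.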

Challenges and Considerations

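While Pipe and Filter brings numerous benefits, it is not without challenges. Performance can be a concern, especially in AI systems requiring real-time processing. Every filter adds processing time, and every hand-off between filters adds some overhead, so end-to-end latency grows with the number of stages while sustained throughput is limited by the slowest filter. Data therefore needs to flow efficiently between stages to maintain high throughput.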
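One common way to keep data moving in Python is to write filters as generators, so each item streams through every stage without the pipeline building a full intermediate list between filters. The following is a minimal sketch with hypothetical filters (`scale`, `drop_negative`, `batch`). It reduces memory pressure between stages, but it does not remove the cost of a slow filter.

```python
from typing import Iterable, Iterator, List


def scale(values: Iterable[float], factor: float) -> Iterator[float]:
    """Filter: multiply every value by a constant factor."""
    for v in values:
        yield v * factor


def drop_negative(values: Iterable[float]) -> Iterator[float]:
    """Filter: discard values below zero."""
    for v in values:
        if v >= 0:
            yield v


def batch(values: Iterable[float], size: int) -> Iterator[List[float]]:
    """Filter: group the stream into lists of at most `size` items."""
    current: List[float] = []
    for v in values:
        current.append(v)
        if len(current) == size:
            yield current
            current = []
    if current:  # emit the final, possibly shorter, batch
        yield current


readings = [1, -2, 3, 4, -5, 6]

# Nothing is computed until the stream is consumed; items are pulled one at a time.
stream = batch(drop_negative(scale(readings, 0.5)), size=2)
batches = list(stream)
print(batches)  # [[0.5, 1.5], [2.0, 3.0]]
```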

Another consideration is data format compatibility between filters. Each stage must output data in a form that the subsequent stage understands. Poorly designed interfaces can lead to integration headaches and unexpected errors.
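A lightweight way to make these interfaces explicit is to give each hand-off its own small data type and validate it at the boundary. The sketch below assumes two hypothetical contracts, `FeatureVector` and `Prediction`, and uses a trivial threshold rule in place of a real model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FeatureVector:
    """Contract between the feature-extraction and classification filters."""
    values: List[float]

    def __post_init__(self) -> None:
        # Fail at the boundary instead of deep inside the next filter.
        if not self.values:
            raise ValueError("feature vector must not be empty")
        if not all(isinstance(v, float) for v in self.values):
            raise TypeError("feature values must be floats")


@dataclass(frozen=True)
class Prediction:
    """Contract between the classifier and any post-processing filter."""
    label: str
    confidence: float


def extract_features(pixels: List[int]) -> FeatureVector:
    return FeatureVector([p / 255.0 for p in pixels])


def classify(features: FeatureVector) -> Prediction:
    # Stand-in rule for illustration only, not a real model.
    score = sum(features.values) / len(features.values)
    return Prediction("bright" if score >= 0.5 else "dark", score)


prediction = classify(extract_features([255, 255, 0, 255]))
print(prediction)  # Prediction(label='bright', confidence=0.75)

try:
    FeatureVector([1, 2])  # integers violate the contract
except TypeError as err:
    print(err)
```

With the contract encoded in a type, a mismatch shows up as an immediate, descriptive error at the stage that produced the bad data.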

Finally, designing filters that are both independent and cohesive requires careful planning. Filters must strike a balance between generality (to maximize reusability) and specificity (to perform their task effectively).

Real-World Applications in AI Modeling

Pipe and Filter architecture is not just a theoretical concept; it has been successfully applied in real-world AI systems. For instance, natural language processing models often rely on pipelines to process text data. A typical pipeline might include tokenization, stemming, stop-word removal, and feature vectorization before the data reaches a machine learning model.
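The text pipeline described above can be sketched with the standard library alone. This is a toy version under stated assumptions: the stemmer is a crude suffix stripper rather than a real algorithm such as Porter's, the stop-word list is tiny, and the vectorizer counts words against a fixed, hand-picked vocabulary.

```python
import re
from collections import Counter
from typing import List

STOP_WORDS = {"the", "are", "is", "a", "and"}  # tiny illustrative list
VOCABULARY = ["cat", "dog", "jump"]            # assumed fixed vocabulary


def tokenize(text: str) -> List[str]:
    """Filter 1: lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def stem(tokens: List[str]) -> List[str]:
    """Filter 2: crude suffix stripping, keeping stems of at least 3 letters."""
    def strip_suffix(word: str) -> str:
        for suffix in ("ing", "ed", "s"):
            if word.endswith(suffix) and len(word) - len(suffix) >= 3:
                return word[: -len(suffix)]
        return word
    return [strip_suffix(t) for t in tokens]


def remove_stop_words(tokens: List[str]) -> List[str]:
    """Filter 3: drop very common words that carry little signal."""
    return [t for t in tokens if t not in STOP_WORDS]


def vectorize(tokens: List[str]) -> List[int]:
    """Filter 4: bag-of-words counts in vocabulary order."""
    counts = Counter(tokens)
    return [counts[word] for word in VOCABULARY]


def text_pipeline(text: str) -> List[int]:
    data = text
    for f in (tokenize, stem, remove_stop_words, vectorize):
        data = f(data)
    return data


print(text_pipeline("The cats are jumping and the dog jumped"))  # [1, 1, 2]
```

The resulting vector is what a downstream machine learning model would receive as input.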

In another example, autonomous vehicles employ similar architectures for sensor data processing. Raw inputs from cameras, LiDAR, and radar sensors pass through filters for calibration, noise reduction, and feature extraction before being analyzed for decision-making.

These examples highlight the versatility of Pipe and Filter in handling diverse data types and workflows.

Final Thoughts

The Pipe and Filter architecture offers a structured and modular approach to building AI systems. It simplifies the handling of complex workflows, encourages reusability, and makes debugging more manageable. By breaking down an AI pipeline into discrete, well-defined stages, developers gain greater control over the system's behavior and performance.

In a field as dynamic and complex as AI, adopting principles like Pipe and Filter can make a significant difference. It is not merely about organizing code; it is about building systems that are adaptable, maintainable, and robust enough to meet the challenges of modern AI applications.

Image Courtesy: NIX (https://nix-united.com/blog/10-common-software-architectural-patterns-part-1/)