Feature Selection Problems: Navigating the Maze of Data Relevance

Dive into the challenges of feature selection in machine learning, where choosing the right data variables can make or break your model. Explore practical examples like predicting loan defaults, uncover common pitfalls, and learn how to balance automated tools with human expertise.

Dr Mahesha BR Pandit

8/25/2024 · 3 min read

In the world of machine learning, the quality of a model is often tied to the quality of the data it is trained on. But not all data features contribute equally to a model's success. Feature selection—the process of identifying the most relevant variables for a given problem—is both an art and a science. When done poorly, it can lead to inefficiencies, overfitting, or even outright failure of the model. Understanding the challenges of feature selection is crucial for anyone working with data.

Why Feature Selection Matters

Imagine you are building a machine learning model to predict house prices. Your dataset contains dozens of features: the number of bedrooms, the square footage, the neighborhood, the age of the house, and even seemingly unrelated variables like the weather on the day the listing was created. While some of these features are highly relevant, others may add noise or redundancy, diluting the model's performance. Choosing the right features is the key to making accurate predictions.

Feature selection impacts not only the model's accuracy but also its interpretability and efficiency. A model with fewer, more relevant features is faster to train and easier to understand. On the other hand, including irrelevant or redundant features can lead to overfitting, where the model learns patterns that do not generalize well to new data.
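To make the overfitting risk concrete, here is a minimal sketch on synthetic data, assuming NumPy is available. The data generator, the coefficients, and the helper names `make_data` and `fit_and_score` are invented for illustration. It fits ordinary least squares twice, once on the two columns that actually drive the target and once with 30 pure-noise columns added.

```python
import numpy as np

rng = np.random.default_rng(0)

def make_data(n_samples, n_noise):
    """Two informative columns followed by n_noise pure-noise columns."""
    informative = rng.normal(size=(n_samples, 2))
    noise = rng.normal(size=(n_samples, n_noise))
    # The target depends only on the two informative columns.
    y = 3.0 * informative[:, 0] - 2.0 * informative[:, 1] + rng.normal(size=n_samples)
    return np.hstack([informative, noise]), y

def add_bias(X):
    """Prepend a column of ones so the model has an intercept."""
    return np.hstack([np.ones((len(X), 1)), X])

def fit_and_score(X_train, y_train, X_test, y_test):
    """Fit ordinary least squares and return (train MSE, test MSE)."""
    coef, *_ = np.linalg.lstsq(add_bias(X_train), y_train, rcond=None)
    train_mse = np.mean((add_bias(X_train) @ coef - y_train) ** 2)
    test_mse = np.mean((add_bias(X_test) @ coef - y_test) ** 2)
    return train_mse, test_mse

# A small training set makes the effect easy to see.
X_train, y_train = make_data(n_samples=40, n_noise=30)
X_test, y_test = make_data(n_samples=2000, n_noise=30)

# Model 1 sees only the two relevant columns; model 2 sees all 32.
train_small, test_small = fit_and_score(X_train[:, :2], y_train, X_test[:, :2], y_test)
train_all, test_all = fit_and_score(X_train, y_train, X_test, y_test)

print(f"2 features : train MSE {train_small:.2f}, test MSE {test_small:.2f}")
print(f"32 features: train MSE {train_all:.2f}, test MSE {test_all:.2f}")
```

The model with all 32 columns fits the training data more closely, because extra columns can only lower the training error of a least-squares fit. On held-out data it typically does worse, which is the signature of overfitting described above.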

The Challenge of Too Many Features

One of the most common problems in feature selection arises when there are too many features in a dataset. In domains like genomics or text analysis, datasets can easily contain thousands or even millions of features. Identifying which ones matter can feel like searching for a needle in a haystack.

This problem is exacerbated by the "curse of dimensionality": as the number of features grows, the data becomes increasingly sparse relative to the space it occupies, so a model needs far more examples to learn reliable patterns, and its predictive power often drops. In high-dimensional datasets, distances between points also start to look alike, with the nearest and farthest neighbors ending up almost equally far away. That makes it harder for the model to distinguish between classes or patterns. Feature selection helps combat this by reducing the dimensionality of the data.
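The effect on distances can be checked with a short experiment. The sketch below uses only the Python standard library and assumes points drawn uniformly from the unit hypercube. The helper `relative_contrast` is a name chosen here for illustration. It measures how much farther the farthest point is than the nearest one, relative to the nearest.

```python
import math
import random

def relative_contrast(n_dims, n_points=500, seed=0):
    """(farthest - nearest) / nearest distance from one query point
    to n_points random points in the unit hypercube."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(n_dims)]
    distances = [
        math.dist(query, [rng.random() for _ in range(n_dims)])
        for _ in range(n_points)
    ]
    return (max(distances) - min(distances)) / min(distances)

for n_dims in (2, 10, 100, 1000):
    print(f"{n_dims:>4} dimensions: relative contrast = {relative_contrast(n_dims):.2f}")
```

The contrast shrinks steadily as dimensions are added: in two dimensions the farthest point is many times farther away than the nearest, while in a thousand dimensions the two are nearly the same distance from the query.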

Practical Example: Predicting Loan Defaults

Consider a practical problem faced by financial institutions: predicting whether a borrower will default on a loan. A dataset for this task might include features like income, credit score, employment history, and loan amount, alongside dozens of other variables such as the borrower's zip code, marital status, or even the number of dependents.

Some features, like credit score and income, are intuitively relevant. Others, like zip code, may have hidden correlations with default rates but are not directly causal. Including irrelevant features can mislead the model, while excluding relevant ones may result in lost predictive power. Moreover, some features may interact with each other, complicating the selection process further.

In this case, feature selection techniques such as recursive feature elimination, information gain, or Lasso regression can help identify the most impactful variables. However, even with these tools, the process requires domain knowledge and careful validation to ensure that the selected features truly enhance the model's performance.
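As one illustration of these techniques, information gain can be computed by hand for categorical features. The sketch below uses only the standard library. The ten loan records and the column names (`credit_band`, `income_band`, `zip_region`, `defaulted`) are invented toy data, not real lending figures.

```python
import math
from collections import Counter, defaultdict

def entropy(labels):
    """Shannon entropy, in bits, of a sequence of class labels."""
    total = len(labels)
    return -sum((n / total) * math.log2(n / total) for n in Counter(labels).values())

def information_gain(rows, feature, target):
    """Reduction in target entropy after splitting the rows on one feature."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[feature]].append(row[target])
    remainder = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return entropy([row[target] for row in rows]) - remainder

# Hypothetical toy records: (credit_band, income_band, zip_region, defaulted)
columns = ("credit_band", "income_band", "zip_region", "defaulted")
raw = [
    ("low", "low", "north", 1),
    ("low", "low", "south", 1),
    ("low", "low", "south", 1),
    ("low", "high", "north", 1),
    ("high", "high", "south", 1),
    ("low", "low", "south", 0),
    ("high", "low", "north", 0),
    ("high", "high", "north", 0),
    ("high", "high", "south", 0),
    ("high", "high", "south", 0),
]
rows = [dict(zip(columns, record)) for record in raw]

# Score every candidate feature against the target and rank them.
gains = {name: information_gain(rows, name, "defaulted") for name in columns[:-1]}
for name, gain in sorted(gains.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<12} {gain:.3f}")
```

On this toy data the credit band ranks first, the income band carries only a little information, and the zip region carries none. A ranking like this is a starting point for validation, not a final answer.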

Common Pitfalls in Feature Selection

Feature selection is not without its pitfalls. One of the most significant challenges is over-reliance on automated methods. Measures like mutual information or correlation coefficients can rank features statistically, but they typically score each feature on its own, so they do not account for interactions between features, the context of the problem, or the specific needs of the model. Blindly trusting these rankings can lead to suboptimal results.
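A contrived example shows how a one-feature-at-a-time ranking can miss an interaction. In the standard-library sketch below, the target is 1 exactly when two binary features differ. The data and the names `feature_a` and `feature_b` are invented for illustration, and `pearson` is a small helper written here rather than a library call.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Contrived data: the target is 1 exactly when the two binary features differ.
feature_a = [0, 0, 1, 1] * 25
feature_b = [0, 1, 0, 1] * 25
target = [a ^ b for a, b in zip(feature_a, feature_b)]

# Scored one at a time, neither feature shows any linear relationship with the target.
corr_a = pearson(feature_a, target)
corr_b = pearson(feature_b, target)
print("corr(a, target):", corr_a)
print("corr(b, target):", corr_b)

# A derived feature that encodes the interaction is perfectly predictive.
a_differs_from_b = [int(a != b) for a, b in zip(feature_a, feature_b)]
corr_combined = pearson(a_differs_from_b, target)
print("corr(a != b, target):", corr_combined)
```

A filter that ranks by individual correlation would discard both features here, even though together they determine the target completely. Real datasets are rarely this extreme, but the same blind spot applies in milder form.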

Another challenge is dealing with multicollinearity, where multiple features are highly correlated with each other. For example, in the loan default dataset, income and debt-to-income ratio might each be strongly predictive yet largely redundant when used together. In linear models, such overlap can also make coefficient estimates unstable and harder to interpret. Feature selection must account for this redundancy to avoid unnecessary complexity.
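One simple way to flag this kind of redundancy is a pairwise correlation filter. The sketch below assumes NumPy and uses synthetic borrowers. The distributions, the 0.9 threshold, and the helper `drop_correlated` are all illustrative choices. The generated debt levels are deliberately similar across borrowers, so the debt-to-income ratio ends up tracking income closely.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 1000

# Synthetic borrowers (all values are invented for illustration).
income = rng.uniform(40_000, 60_000, size=n)
debt = rng.normal(20_000, 500, size=n)        # assumed: similar debt for everyone
debt_to_income = debt / income
credit_score = rng.normal(680, 50, size=n)    # generated independently of income

names = ["income", "debt_to_income", "credit_score"]
X = np.column_stack([income, debt_to_income, credit_score])

def drop_correlated(X, names, threshold=0.9):
    """Greedy filter: keep a column only if its absolute Pearson correlation
    with every column kept so far is at or below the threshold."""
    corr = np.abs(np.corrcoef(X, rowvar=False))
    kept = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= threshold for k in kept):
            kept.append(j)
    return [names[j] for j in kept]

selected = drop_correlated(X, names)
print(selected)
```

The filter keeps whichever column of a correlated pair comes first, so the column order encodes a preference. Deciding which of two redundant features to keep is a judgment call that usually depends on which one is easier to collect or explain.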

There is also the risk of underfitting when too few features are selected. Simplifying the model by excluding important variables can lead to poor performance, as the model fails to capture critical patterns in the data.

Balancing Art and Science

Feature selection is as much about judgment as it is about algorithms. While statistical methods and tools can guide the process, the final decisions often require domain expertise and iterative experimentation. Understanding the context of the problem, the relationships between features, and the behavior of the model is essential.

In practice, feature selection is rarely a one-time task. As new data becomes available or the problem evolves, features may need to be reevaluated and adjusted. The process is iterative, requiring constant refinement to align the data with the goals of the model.

The Path Forward

Feature selection is a foundational step in building effective machine learning models, but it comes with its share of challenges. By understanding the nuances of the process and the common pitfalls, data scientists can make better decisions and create models that are not only accurate but also interpretable and efficient.

The next time you work on a dataset, remember that the features you choose—or overlook—can make all the difference. Feature selection is not just about narrowing down options; it is about uncovering the true story that your data has to tell.

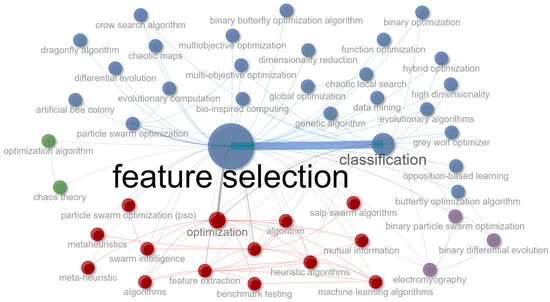

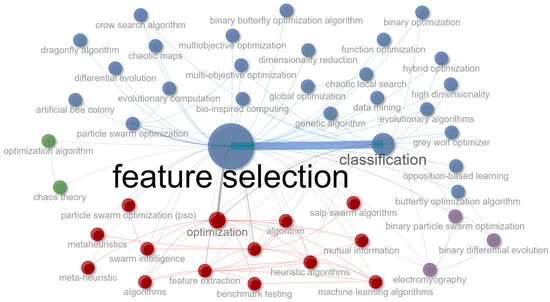

Image Courtesy: MDPI, https://www.mdpi.com/2313-7673/9/1/9